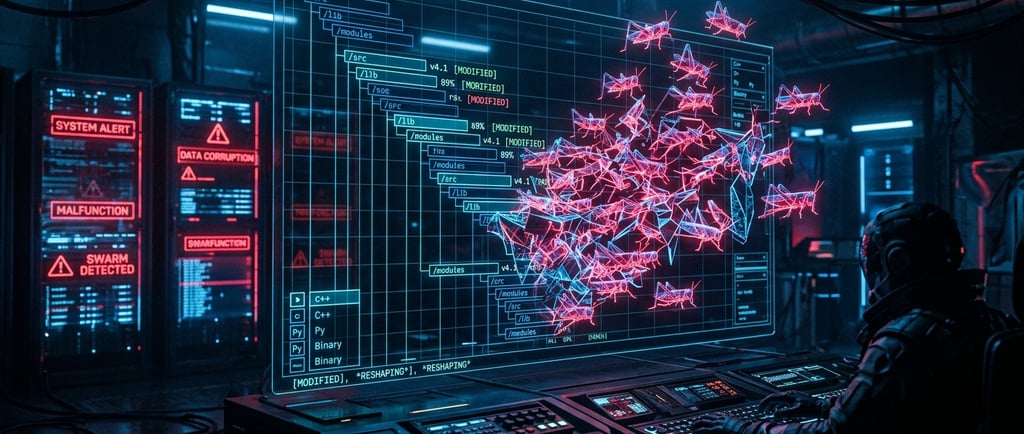

LLMs Unchained: Cybercriminals Outpace Scanners with Logic-Busting AI Malware

Anshumaan Bakshi

5/13/2026 · 2 min read

The traditional perimeter is dissolving under a wave of automated exploitation. Cybercriminals are no longer just experimenting with generative AI; they have operationalised it to bypass security controls at scale. The Google Threat Intelligence Group (GTIG) confirms that the AI vulnerability race is actively underway. Attackers are exploiting logic flaws and supply chains faster than defenders can patch them.

The Structural Friction: Scanners vs. Semantic Errors

Existing security architectures rely heavily on static signatures and pattern matching. This approach fails against AI-driven tactics:

Signature Scanners Blinded: AI obfuscation engines like CANFAIL and LONGSTREAM generate unique decoy code to mask malicious payloads.

Logic Over Memory Flaws: Automated exploits target hard-coded trust assumptions rather than traditional memory corruption vulnerabilities.

Autonomous UI Control: Backdoors like PROMPTSPY map UI hierarchies via Accessibility APIs to execute live on-screen gestures.

Zero-Day Pipeline Acceleration: Criminal actors use structured Python scripting and hallucinated CVSS frameworks to spin up mass operations.
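To make the signature problem concrete, here is a minimal sketch of why exact-match signatures break once decoy code is added. It assumes a hypothetical signature database of SHA-256 digests and uses harmless stand-in strings rather than any real sample; the names `KNOWN_SAMPLE`, `DECOY_VARIANT`, and `signature_match` are illustrative and do not come from the GTIG report.

```python
import hashlib


def sha256_hex(source: str) -> str:
    """Return the SHA-256 hex digest of a source string."""
    return hashlib.sha256(source.encode("utf-8")).hexdigest()


# Harmless stand-in for a known sample (assumption: no real malware is reproduced here).
KNOWN_SAMPLE = "def run():\n    return 'payload'\n"

# Same observable behaviour, but wrapped in unused decoy code.
DECOY_VARIANT = (
    "def _unused_helper(x):\n"
    "    return x * 2\n"
    "\n"
    "def run():\n"
    "    _ = _unused_helper(21)  # decoy call, result discarded\n"
    "    return 'payload'\n"
)

# Hypothetical signature database containing only the known sample's digest.
SIGNATURES = {sha256_hex(KNOWN_SAMPLE)}


def signature_match(source: str) -> bool:
    """Exact-digest lookup, the simplest form of static signature."""
    return sha256_hex(source) in SIGNATURES


def behaviour(source: str) -> str:
    """Run the snippet and return what run() produces.

    exec() is acceptable here only because both inputs are fixed, harmless strings.
    """
    namespace = {}
    exec(source, namespace)
    return namespace["run"]()


if __name__ == "__main__":
    print(signature_match(KNOWN_SAMPLE))   # the original is flagged
    print(signature_match(DECOY_VARIANT))  # the variant is not
    print(behaviour(KNOWN_SAMPLE) == behaviour(DECOY_VARIANT))
```

Real scanners use richer signatures than a whole-file digest, but the weakness is the same in kind: a check tied to the bytes of the code misses a variant that changes those bytes while behaving identically.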

The Technical Reality: Operational Relay Networks and AI Supply Chains

State-linked actors are pushing the computational limits of threat delivery. China's APT27 uses LLMs to manage complex fleet networks, routing attacks through mobile and router proxies to acquire residential IPs.

Concurrently, the focus has shifted toward the open-source AI supply chain. Orchestration wrappers, API connectors, and skill configuration files present high-value targets. The recent TeamPCP supply chain compromises targeting platforms like LiteLLM highlight a new vector. By embedding credential stealers in build environments, threat actors exfiltrate cloud secrets and LLM API tokens, converting AI gateways into initial access points.
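One common mitigation for tampered build dependencies is to pin each artifact to a known digest and refuse anything that does not match. The sketch below shows that idea in isolation; `verify_artifact` and its parameters are hypothetical names, and where the pinned digest comes from (a reviewed lockfile, for example) is an assumption, not something the source describes.

```python
import hashlib
import hmac
from pathlib import Path


def file_sha256(path: Path, chunk_size: int = 65536) -> str:
    """Stream a file through SHA-256 so large artifacts are not loaded at once."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, pinned_sha256: str) -> bool:
    """Return True only if the file's digest equals the pinned value.

    pinned_sha256 is assumed to come from a reviewed lockfile (hypothetical).
    """
    actual = file_sha256(path)
    return hmac.compare_digest(actual, pinned_sha256.strip().lower())
```

A build script would call `verify_artifact` before installing or importing a downloaded package and abort on `False`. For Python dependencies, pip's `--require-hashes` mode enforces the same kind of check at install time.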

TL;DR Version

Active Race: AI-assisted zero-day discovery and malware engineering are happening in the wild right now.

Defensive Blindspots: Traditional scanners miss the subtle logic errors found in AI-generated exploits.

Evasion Scale: Malware families leverage custom LLM APIs for real-time code modification and obfuscation.

Autonomous Execution: Backdoors send device UI structures directly to LLMs to simulate human interaction.

Gateway Vulnerabilities: Compromised AI orchestration wrappers like LiteLLM expose critical enterprise cloud secrets.

The Verdict

Defenders must abandon purely reactive patching cycles. As threat actors automate reconnaissance and exploit delivery, engineering teams must deploy autonomous defensive agents like Big Sleep and CodeMender to actively hunt bugs before compilation. Securing the AI orchestration layer is now a tier-one operational priority.
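As one small, concrete step toward securing the orchestration layer, build steps can be run with only the environment variables they actually need, so a compromised dependency finds fewer secrets to steal. This is a minimal sketch under stated assumptions: the allowlist contents and the function names `scrubbed_env` and `run_build_step` are hypothetical, and it is not a substitute for a proper secrets manager.

```python
import os
import subprocess

# Hypothetical allowlist: only variables a build step needs to start.
ALLOWED_ENV = frozenset({"PATH", "HOME", "LANG"})


def scrubbed_env(environ, allowed=ALLOWED_ENV):
    """Return a copy of environ containing only allowlisted variable names."""
    return {name: value for name, value in environ.items() if name in allowed}


def run_build_step(command, environ=None):
    """Run a command with a reduced environment instead of the full parent one.

    Cloud credentials and LLM API tokens present in the parent process are
    not passed on unless their names are explicitly allowlisted.
    """
    env = scrubbed_env(os.environ if environ is None else environ)
    return subprocess.run(
        command, env=env, capture_output=True, text=True, check=False
    )
```

An allowlist is used rather than a blocklist because new secret names appear over time, and anything not explicitly permitted stays out of the child process.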

Thank you for reading AB Tech Insights Weekly. For feedback or inquiries, reach out at reach@anshumaanbakshi.com.
